Resources for Operational Excellence

Using AI to Strengthen Critical Thinking for Continuous Improvement Work

How AI Chatbots are Changing the Future of Professional Learning

Embracing the Future: Why We Must Adopt AI Now

Top 10 Lessons Learned From Process Improvement Leadership

Lean Leadership Habits That Drive Organizational Excellence

Sustainable Training Routines for Operational Excellence

Quality Time with MoreSteam Podcast

- Episode 51: Leadership Under Pressure with Adelaido Godinez

- Episode 50: The Daily Work of Leadership with Jon Dario

- Episode 49: Most Meetings Shouldn't Happen with Evan Unger

- Episode 42: Change Management That Actually Works with Kim Scibelli

- Episode 37: The Efficiency Engine That Powers Make-A-Wish with Taylor Pack

- Episode 36: Making Things Better For Everyone with Josh Howell

Watch the Latest Webinars

- Building a Sustainable Lean Culture From Strategy to Daily Practice

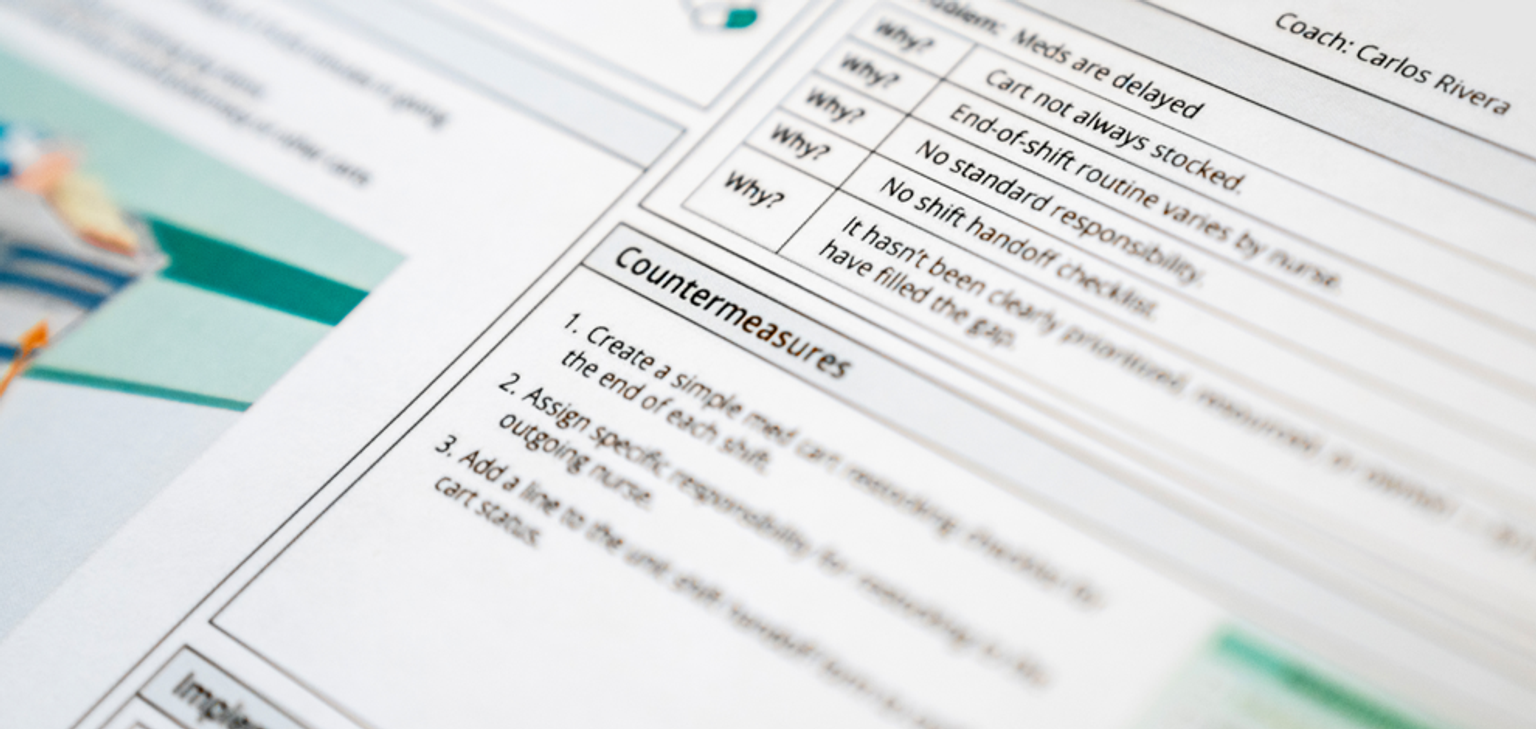

- Making A3 Problem Solving Accessible, Actionable, and Real

- Driving Strategic Alignment with the Lean Management System

- Modeling Risk with Confidence Using Monte Carlo Simulation

- Good Lean Six Sigma Projects Start with Good Problem Statements

- First Things First: Verifying Assumptions Before Hypothesis Testing

Explore EngineRoom

- Get to Know EngineRoom Data Analysis Software

- MoreSteam and ASQ Strengthen Partnership to Promote EngineRoom®

- The Essential Data Analysis Software for Operational Excellence Teams

- Exploring the Power of Response Surface Methodology

- The Future of RCA: Machine Learning-Driven Root Cause Discovery

- The Top 5 EngineRoom Features That Make Data Analysis Easy

Lean Six Sigma Resources for Continuous Improvement and Operational Excellence

Welcome to MoreSteam's Lean Six Sigma Resource Library, a curated hub of content for professionals focused on process improvement, operational excellence, and continuous learning. Whether you're starting your Lean Six Sigma journey or advancing an enterprise-wide initiative, this page collects tools and insights to help you solve problems and deliver measurable results.

Explore expert-led webinars, in-depth case studies, white papers, blogs, and podcasts, all designed to help you apply Lean Six Sigma principles in real-world settings. Learn how organizations across industries use tools like value stream mapping and root cause analysis to reduce waste and improve quality. From foundational concepts to emerging trends in AI and digital transformation, these Lean Six Sigma resources support your growth as a problem-solver and leader in operational excellence, and they cover inspiration, training, and tactical guidance alike.

Many of these Lean Six Sigma resources also feature tools developed by MoreSteam, such as EngineRoom for data analysis and TRACtion for project tracking. From validating a process map to leading a team through DMAIC, these OpEx resources provide practical support for continuous improvement.